Empirical vs. Analytical Analysis

There has been a long-running thread on comp.object built around an argument over the following statement.

>So you assume existence of some ideal and want
>to prove that this is what the program does, by
>means of tests. That's impossible. Period.

I disagree, and the thread was the back-and-forth argument about that disagreement.

However, I'd like to make a different point. And I'll do it by example. Last night my son was doing some trig homework. I asked to see one of his problems.

Find all x: 0<=x<=360 | 1-sin x = cos(2x)

He wrote: 0, 30, 150, 180, 360.

I checked these answers by calculating them in my head, and then sent him on his way.

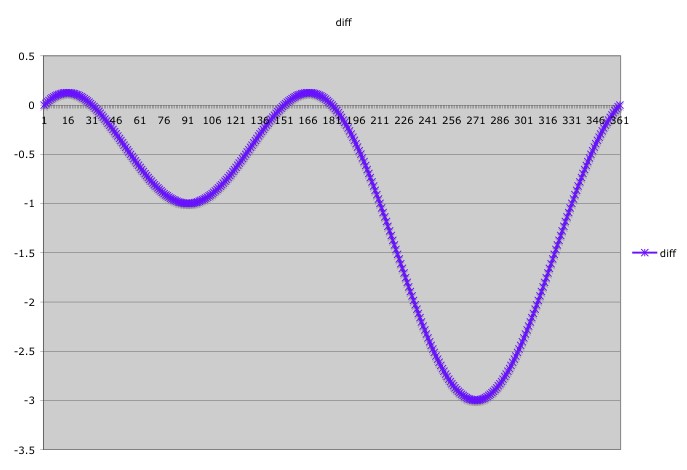

Later I started thinking that he might not have found all the answers. My trig is a bit rusty, and I didn't feel like solving the equations. So instead I set up a spreadsheet that evaluated the difference between 1-sin x and cos 2x in one degree increments from 0 to 360.

Then I plotted the result. I got a beautiful waveform that crossed the X axis at 0, 30, 150, 180, and 360. Nice.
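The same one-degree sweep can be sketched in a few lines of Python. This is only an illustration of the technique, not the original spreadsheet; the name `difference` and the `TOLERANCE` cutoff are assumptions made for the sketch.

```python
import math

def difference(degrees):
    """Return (1 - sin x) - cos 2x, with x given in degrees."""
    x = math.radians(degrees)
    return (1 - math.sin(x)) - math.cos(2 * x)

# Assumed cutoff for treating a floating-point result as zero.
TOLERANCE = 1e-9

# One sample per whole degree from 0 to 360, like one spreadsheet row each.
zeros = [d for d in range(0, 361) if abs(difference(d)) < TOLERANCE]
print(zeros)  # → [0, 30, 150, 180, 360]
```

Like the spreadsheet, this only samples whole degrees, so it says nothing by itself about what happens between the samples.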

Does this prove that he was correct? Not quite. From a purely empirical point of view I cannot prove that. My spreadsheet did not check values like 1.5 or 2.63. However, the shape of the waveform told me that there was no chance of that.

So I used an empirical technique, augmented with an analytical evaluation of the waveform. The empirical showed me that there were no other obvious solutions; and the qualitative analysis of the shape of the waveform showed me that there were no non-obvious solutions.

Bringing this back to software, techniques such as TDD are valuable empirical techniques that can create the dots; but you need reasoned analysis to connect those dots. A suite of tests shows you that a program behaves as expected for discrete situations. Analytical reasoning tells you how you can generalize those situations.

There is a very simple analytic solution to this problem.

1 - sin(x) = cos(2x)

or, 1 - sin(x) = 1 - 2 sin(x) * sin(x)

or, 2 sin(x) * sin(x) - sin(x) = 0

or, sin(x) * [ 2sin(x) - 1 ] = 0

Hence, sin(x) = 0 OR 2 sin(x) - 1 = 0

Which leads to x = 0, 180, 360; and to x = 30, 150

I much prefer this simple analytic solution because I then know that the solution is correct, and that there are no other possible solutions.

The diff method is generally used for problems that have no analytic solutions.

If the problem had been changed slightly to

1 - sin(x) = 1.5 cos(2x)

the diff method would give only very approximate results, but the analytic method would always work, after solving the resulting quadratic equation.
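As a sketch of what that would look like: substituting cos(2x) = 1 - 2 sin(x) * sin(x) into the modified equation gives 3 s * s - s - 0.5 = 0 with s = sin(x). The Python below solves that quadratic and maps each root back to angles between 0 and 360. The variable names and the `residual` helper are assumptions made for illustration.

```python
import math

# With s = sin(x) and cos(2x) = 1 - 2 s^2, the equation
#   1 - s = 1.5 (1 - 2 s^2)
# rearranges to 3 s^2 - s - 0.5 = 0.
a, b, c = 3.0, -1.0, -0.5
root = math.sqrt(b * b - 4 * a * c)
sines = [(-b + root) / (2 * a), (-b - root) / (2 * a)]

solutions = []
for s in sines:
    base = math.degrees(math.asin(s))    # principal angle in [-90, 90]
    for angle in (base, 180.0 - base):   # sin(x) = sin(180 - x)
        solutions.append(angle % 360.0)  # fold into [0, 360)
solutions.sort()

def residual(degrees):
    """Left side minus right side of 1 - sin(x) = 1.5 cos(2x)."""
    x = math.radians(degrees)
    return (1 - math.sin(x)) - 1.5 * math.cos(2 * x)

for angle in solutions:
    print(f"{angle:8.3f}  residual {residual(angle):.1e}")
```

The four angles come out near 37.4, 142.6, 195.9 and 344.1 degrees. None is a whole number of degrees, so a one-degree sweep could only bracket them.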

- Granted, but that wasn't the problem. All too often in software we solve the general case when all we've been asked to solve is one specific case. Solving the general case is not always the best approach. In this case my trig identities were rusty (your first step befuddles me). The empirical approach satisfied me as to the correctness of the answer without forcing me to re-learn trig (I got back to watching TV with my wife in 5 minutes). In software we often face unknowns (rather like my trig identities). The empirical approach (TDD), coupled with sufficient reasoning, can be a good approach to mitigating those unknowns quickly. - UB

Ravi posted a very nice analytical solution. I can't follow it because I've forgotten my trig identities. So I am not sure that it's correct. I just have to trust him. ;-)

I generally try to solve the general case when I can. Give me a quadratic, and I'll drop right to the quadratic formula. Show me an equation, and I'll try to solve it. Indeed, that was my first instinct with this trig relation. But within seconds I realized that I had forgotten the identities that would allow me to do what Ravi did. So I used an empirical approach. I proved to myself that my son's inspection method of solving the problem had, indeed, found all the solutions. So I was done very quickly, and settled back to watch TV with my wife, who had been patiently waiting.

In software I am also always on the lookout for general solutions. However, I also know that general solutions are often expensive, time consuming, and (unfortunately) less general than we think. So I am very careful about adopting "general" solutions.

This is a tightrope. If you eschew analysis in favor of completely empirical evidence, you'll miss all kinds of wonderful simplifying models. On the other hand, if you avoid empirical data, you may create models that can't be built.

So the lesson is to accept both tools and use them both wisely. (It's that last word that's the tricky bit.)

This is perhaps the best argument for TDD I've seen :)

Having read the thread in question, I agree with you that you could test all possibilities, even if it is improbable. This does remind me, though, of your post on JustTenMinutesWithoutAtest, where you discovered that your tests hadn't accounted for a corner case with a particular object. What I wonder is whether an analytical approach to building the tests for that object would have identified it.

Also, he used the identity:

cos(2x) = 1 - 2 sin(x) * sin(x)

Which is one of the double angle identities
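For anyone who has forgotten the identity and would rather not take it on trust, a quick numeric spot check is possible. The sketch below assumes the identity in question is cos(2x) = 1 - 2 sin(x) * sin(x), as used in the first step of the derivation above, and compares both sides at every whole degree. The name `identity_gap` is made up for the example. Agreement at 361 sample points is evidence, not a proof.

```python
import math

def identity_gap(degrees):
    """Absolute difference between cos(2x) and 1 - 2 sin^2(x), x in degrees."""
    x = math.radians(degrees)
    return abs(math.cos(2 * x) - (1 - 2 * math.sin(x) ** 2))

# Largest disagreement over the whole-degree samples from 0 to 360.
worst = max(identity_gap(d) for d in range(0, 361))
print(worst < 1e-12)  # → True
```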

I feel that it is good to have both tools (analytical techniques and empirical methods) in your arsenal. The more (and sharper) the types of tools you have in your arsenal, the better a programmer you will become.

The important thing, as Uncle Bob has pointed out, is to know what tool to use in a given situation.

For me, the analytical solution was obvious. In about a minute or two, I had it solved without using pen and paper. So, probably it took less time to get the analytic solution than to verify the result of the inspection technique. ;-)

My limited experience with software development has been that there are very similar problems that occur again and again in different projects. Often, we fail to recognize the similarities and end up re-doing things. This manifests itself not just across different projects but even within projects, when developers create almost identical code in several methods without realizing the common features.

For example, in Java prior to 1.5, I found the use of the Iterator and the subsequent casting inside loops to be a major irritant. Not only is very similar code strewn all over the place, but also you are forced to look into the plumbing of Java, unnecessarily having to learn how Collections work. This prevents you from programming at a high level of abstraction.

The best advice that I have seen for software development is at Peter Norvig's site, where he gives the following advice to develop software. (http://norvig.com/design-patterns/ppframe.htm, Pages 63, 64 and 65):

(1) Start with English description.

(2) Write code from description.

(3) Translate code back to English; compare to (1)

This simple strategy ensures that code is reusable, is done at a high level of abstraction and is easily maintainable.

Ravi, I agree that the analytical solution is nice to have to be sure. On the other hand, I find it to be complex enough that I wouldn't fully trust myself having it totally correct. I think I wouldn't trust it if it didn't agree with Robert's graph...

Ilja, for simple problems, either technique suffices.

The trick is to know when to use what.

The more important thing is to have both techniques in your toolkit.

An electrician who comes to my house to fix a problem armed only with a screwdriver and a basic tester is no good. I'd like to see him bring a few tools, just in case the problem requires more than a screwdriver to solve.

Likewise, a developer who is aware of several ways of doing things is more valuable than somebody who has one tool in his kit and insists on applying it to all problems.

The analytic solution is simple enough for any 10th grade student to understand. As we grow older, we forget these formulas, and that is normal. But to say that the analytic solution is more complex, or that you wouldn't trust it suggests that one is unwilling to experiment with new things.

Look at the site www.dbdebunk.com where Fabian Pascal keeps bemoaning the anti-intellectual nature of Western society in general, and US society in particular.

I absolutely agree that both techniques need to be in our toolkit. The other thing we need to be able to do is know when to use which technique. Ilja made a great point. He said he wasn't sure that the derivation of the math was right, and used the empirical data to mitigate his fear. My situation was even worse: I had forgotten the critical identity that made the derivation possible. So both of us had a choice to make.

- Re-learn the identities (and understand them), and then finish the analysis

- Rely on what analysis we could do, bolstered by empirical data.

So I don't view the empirical solution as a retreat into anti-intellectualism. I see it as an economic tradeoff.

If I remember correctly, the four color theorem was finally proved through a combination of analytical operations and a rather large, computer-assisted enumeration. I remember reading that some mathematicians had a hard time thinking of an empirical enumeration as a proof.

- "However, because part of the proof consisted of an exhaustive analysis of many discrete cases by a computer, some mathematicians do not accept it." from http://mathworld.wolfram.com/Four-ColorTheorem.html.

And yet the computer assisted proof still stands. So it appears that even the world of topology and mathematics must sometimes bow to empirical proofs.

Math is a playful subject. Mathematicians mess around with things as they experiment and discover. Then it comes time to write up the results for other people. You need to figure out the formal path through the argument, and often the need for rigor crowds out the intuitive step that led you to the result in the first place. Now that you've written your draft of the result for publication, you realize it is too wordy. As one of my profs told me, now you cross out every other sentence and many of the intermediate calculations. Sure, they make your argument easier to follow, but to the technical audience they are unnecessary.

Now we have a result that we release into the world and someone writes a book that includes the result and someone else reads the book and writes it on a chalkboard somewhere in front of a class of people too young to listen attentively all the time. They cram for the test remembering half-angle formulas for trig functions and clever substitutions for proving trig identities. Maybe they go on to Calculus where they have to use these techniques to calculate integrals or in Physics for resolving vectors.

Years go by and their child comes to them with a problem. What I love about Bob's story at this point was (1) he was able to check that all of his son's answers were correct and (2) he checked that his son had found all of the correct answers and (3) his method for checking (2) could be fairly rough. Many people never get to (2) - not in math and certainly not in life. They have an answer or answers that work and don't stop to think about whether or not there are other right answers. I found (3) delightful.

Years ago I came to XP particularly because the practices resonated with me as a former Math teacher. Some teachers might see (3) as cheating - I'd love to see a student do (3) and then solve the problem to get there. It stays with them longer. They aren't just solving a bunch of goo. Also, it is not a trivial jump to graph it this way. Many students would graph y = 1-sin x and y = cos 2x on the same axes and try to figure out where they intersect. Nothing wrong with that. That would show one function wiggling twice as often as the other which might explain the cluster of answers. This picture makes it easier to locate the solutions to the problem.

Thanks for the write-up Bob,

D
